OSC

Up until now, I had avoided OSC because getting synths that understand it set up correctly was inconsistent at best and difficult at worst. At the time, I was wringing all I could out of MIDI, or rather unhappily building internal audio engines, knowing that the result would not be as good as a battle-hardened synth. I have tinkered with Pd (hard to do non-trivial programming in brute-force visual spaghetti code), SuperCollider (a rather horrible language, but with more complete programming capability), and ChucK (a little weird at first, but a great language, though performance is not necessarily great). The other main issue was that before I found myself on an x86 tablet, the GPL license of SuperCollider and ChucK was problematic. On iOS, you end up having to bake everything into a monolithic app.

But I really wanted to offload all signal processing into one of these environments somehow, and found out that ChucK does OSC really nicely. It's pointless (and ridiculous) for me to spend my time implementing an entire windowing system because the native (or just common) toolkits have too much latency, and it's just stupid for me to try to compete with the greatest synthesizers out there. So, I offloaded absolutely everything that's not in my area of expertise or interest. The synthesizer behind it?

Here is a ChucK server that implements a 10 voice OSC synth with a timbre on the y axis (implemented a few minutes after the video above). It's just sending tuples of (voice, amplitude, frequency, timbre):

//chucksrv.ck

//run like: chuck chucksrv.ck

"/rjf,ifff" => string oscAddress;

1973 => int oscPort;

10 => int vMax;

JCRev reverb;

SawOsc voices[vMax];

SawOsc voicesHi[vMax];

for( 0 => int vIdx; vIdx < vMax; vIdx++) {

voices[vIdx] => reverb;

voicesHi[vIdx] => reverb;

0 => voices[vIdx].gain;

440 => voices[vIdx].freq;

0 => voicesHi[vIdx].gain;

880 => voicesHi[vIdx].freq;

}

0.6 => reverb.mix;

reverb => dac;

OscRecv recv;

oscPort => recv.port;

recv.listen();

recv.event( oscAddress ) @=> OscEvent oe;

while( oe => now ) {

while( oe.nextMsg() ) { //drain every queued message, not just one per wake-up

oe.getInt() => int voice;

oe.getFloat() => float gain;

0.5 * oe.getFloat() => float freq => voices[voice].freq;

2 * freq => voicesHi[voice].freq;

oe.getFloat() => float timbre;

timbre * 0.025125 * gain => voices[voice].gain;

(1 - timbre) * 0.0525 * gain => voicesHi[voice].gain;

//<<< "voice=", voice, ",vol=", gain, ",freq=", freq, ",timbre", timbre >>>;

}

//me.yield();

}

while( true ) {

1::second => now;

me.yield();

}

You need two things to decipher ChucK. First, assignment is syntactically backwards: "rhs => type lhs" rather than the traditional C "type lhs = rhs", and the "@=>" operator is just assignment by reference. Second, there is the special variable "now". In most languages, "now" is a read-only value. But in ChucK, you set up a graph of oscillators and advance time forward explicitly (ie: move forward by 130ms, or move time forward until an event comes in). So, in this server, I just made an array of oscillators such that incoming messages will use one per finger. When the messages come in, they select the voice and set volume, frequency, and timbre. It really is that simple. Here is a client that generates a random orchestra that sounds like microtonal violinists going kind of crazy (almost all of the code is incidental to understanding what it does; the checks against random variables just create reasonable distributions for jumping around by fifths, octaves, and along scales):

//chuckcli.ck

//run like: chuck chuckcli.ck

"/rjf,ifff" => string oscAddress;

1973 => int oscPort;

10 => int vMax;

"127.0.0.1" => string oscHost;

OscSend xmit;

float freq[vMax];

float vol[vMax];

for( 0 => int vIdx; vIdx < vMax; vIdx++ ) {

220 => freq[vIdx];

0.0 => vol[vIdx];

}

[1.0, 9.0/8, 6.0/5, 4.0/3, 3.0/2] @=> float baseNotes[];

float baseShift[vMax];

int noteIndex[vMax];

for( 0 => int vIdx; vIdx < vMax; vIdx++ ) {

1.0 => baseShift[vIdx];

0 => noteIndex[vIdx];

}

xmit.setHost(oscHost,oscPort);

while( true )

{

Std.rand2(0,vMax-1) => int voice;

//(((Std.rand2(0,255) / 256.0)*1.0-0.5)*0.1*freq[voice] + freq[voice]) => freq[voice];

((1.0+((Std.rand2(0,255) / 256.0)*1.0-0.5)*0.0025)*baseShift[voice]) => baseShift[voice];

//Maybe follow leader

if(Std.rand2(0,256) < 1) {

0 => noteIndex[1];

noteIndex[1] => noteIndex[voice];

baseShift[1] => baseShift[voice];

}

if(Std.rand2(0,256) < 1) {

0 => noteIndex[0];

noteIndex[0] => noteIndex[voice];

baseShift[0] => baseShift[voice];

}

//Stay in range

if(vol[voice] < 0) {

0 => vol[voice];

}

if(vol[voice] > 1) {

1 => vol[voice];

}

if(baseShift[voice] < 1) {

baseShift[voice] * 2.0 => baseShift[voice];

}

if(baseShift[voice] > 32) {

baseShift[voice] * 0.5 => baseShift[voice];

}

//Maybe silent

if(Std.rand2(0,64) < 1) {

0 => vol[voice];

}

if(Std.rand2(0,3) < 2) {

0.01 +=> vol[voice];

}

if(Std.rand2(0,1) < 1) {

0.005 -=> vol[voice];

}

//Octave jumps

if(Std.rand2(0,4) < 1) {

baseShift[voice] * 2.0 => baseShift[voice];

}

if(Std.rand2(0,4) < 1) {

baseShift[voice] * 0.5 => baseShift[voice];

}

//Fifth jumps

if(Std.rand2(0,256) < 1) {

baseShift[voice] * 3.0/2 => baseShift[voice];

}

if(Std.rand2(0,256) < 1) {

baseShift[voice] * 2.0/3 => baseShift[voice];

}

//Walk scale

if(Std.rand2(0,8) < 1) {

0 => noteIndex[voice];

}

if(Std.rand2(0,16) < 1) {

(noteIndex[voice] + 1) % 5 => noteIndex[voice];

}

if(Std.rand2(0,16) < 1) {

(noteIndex[voice] - 1 + 5) % 5 => noteIndex[voice];

}

//Make freq

27.5 * baseShift[voice] * baseNotes[noteIndex[voice]] => freq[voice];

xmit.startMsg(oscAddress);

xmit.addInt(voice);

xmit.addFloat(vol[voice]);

xmit.addFloat(freq[voice]);

xmit.addFloat(1.0);

35::ms +=> now;

<<< "voice=", voice, ",vol=", vol[voice], ",freq=", freq[voice] >>>;

}

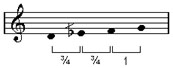

What is important is the xmit code. When I went to implement OSC manually in my Windows instrument, I had to work out a few nits in the spec to get things to work. The main thing is that OSC messages are about as simple as can be imagined (although a bit inefficient compared to MIDI). The first thing to know is that every element of an OSC message must be padded to a multiple of 4 bytes. Writer stream APIs generally don't null-terminate strings for you, so you need to pad each string yourself: at least one null terminator, plus up to 3 extra null bytes to reach the 4-byte boundary. So, OSC is like a remote procedure call mechanism where the function name is a URL-style path in ASCII, followed by a function signature in ASCII, followed by the binary data (big-endian 32-bit ints and floats, etc).

"/foo" //4 bytes

\0 \0 \0 \0 //this means we need 4 null terminator bytes

",if" //method signatures start with comma, followed by i for int, f for float (32bit bigendian)

\0 //there were 3 bytes in the string, so 1 null terminator makes it 4 byte boundary

[1234] //literally, a 4 byte 32-bit big endian int, as the signature stated

[2.3434] //literally, a 4 byte 32-bit big endian float, as signature stated

There is no other messaging ceremony required. The set of methods defined is up to the client and server to agree on.
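The layout above can be reproduced in a few lines. This is only a minimal sketch in Python, not the code from my instrument: the helper names (`osc_string`, `osc_message`, `parse_osc_message`) are made up for illustration, and it handles only 32-bit ints and floats, which is all the messages in this post use.

```python
import struct

def osc_string(s: str) -> bytes:
    """ASCII string plus a null terminator, padded with nulls to a 4-byte boundary."""
    raw = s.encode("ascii") + b"\x00"
    return raw + b"\x00" * (-len(raw) % 4)

def osc_message(address: str, *args) -> bytes:
    """Build one OSC message: padded address, padded signature, big-endian data."""
    tags = ","
    payload = b""
    for arg in args:
        if isinstance(arg, int):
            tags += "i"
            payload += struct.pack(">i", arg)   # 32-bit big-endian int
        elif isinstance(arg, float):
            tags += "f"
            payload += struct.pack(">f", arg)   # 32-bit big-endian float
        else:
            raise TypeError(f"unsupported OSC argument: {arg!r}")
    return osc_string(address) + osc_string(tags) + payload

def read_osc_string(data: bytes, pos: int):
    """Read a padded string starting at pos; return it and the next field's offset."""
    end = data.index(b"\x00", pos)
    text = data[pos:end].decode("ascii")
    # Fields start on 4-byte boundaries, so skip the terminator and any padding.
    return text, (end // 4 + 1) * 4

def parse_osc_message(data: bytes):
    """Inverse of osc_message: the signature says how many 4-byte values follow."""
    address, pos = read_osc_string(data, 0)
    tags, pos = read_osc_string(data, pos)
    args = []
    for tag in tags[1:]:                        # skip the leading comma
        fmt = {"i": ">i", "f": ">f"}[tag]
        args.append(struct.unpack_from(fmt, data, pos)[0])
        pos += 4
    return address, args

packet = osc_message("/foo", 1234, 2.3434)
print(len(packet), packet.hex(" ", 4))          # 20 bytes, shown in 4-byte groups
```

Sending the result is then a single UDP datagram per message, for example with `socket.sendto(packet, ("127.0.0.1", 1973))` on a `SOCK_DGRAM` socket to reach the ChucK server above.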

Note that the method signature and null terminators tell the parser exactly how many bytes to expect. Note also that the major synths generally use UDP(!!!). So, you have to write things as if messages are randomly dropped (they are. they will be.). For instance, you will get a stuck note if you only send volume zero once to turn off a voice, or leaks if you expect the other end to reliably follow all of the messages. So, when you design your messages in OSC, you should make heartbeats double as a mechanism to explicitly zero out notes that are assumed to be dead (ie: infrequently send 'note off' to all voices to cover for packet losses). If you think about it, this means that even though OSC is agnostic about the transport, in practice you will need to at least design the protocol as if UDP is the transport.
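One way to get that heartbeat behavior is sketched below. It is an illustration under my own assumptions rather than the code in the instrument: the `VoiceState` class, its method names, and the `heartbeat_every` parameter are all hypothetical, and the tuples follow the (voice, volume, frequency, timbre) layout used by the ChucK server above.

```python
class VoiceState:
    """Tracks what the client believes each voice is doing (hypothetical helper)."""

    def __init__(self, voice_count, heartbeat_every=20):
        self.volume = [0.0] * voice_count
        self.freq = [440.0] * voice_count
        self.heartbeat_every = heartbeat_every   # in ticks, an arbitrary choice
        self.ticks = 0

    def set_voice(self, voice, volume, freq):
        """Record a change and return the tuple to send right away."""
        self.volume[voice] = volume
        self.freq[voice] = freq
        return (voice, volume, freq, 1.0)

    def tick(self):
        """Call once per send interval; returns the heartbeat tuples that are due."""
        self.ticks += 1
        if self.ticks % self.heartbeat_every != 0:
            return []
        # Re-send silence for every voice believed to be off, in case the
        # original 'volume zero' packet was dropped on the way.
        return [(v, 0.0, self.freq[v], 1.0)
                for v in range(len(self.volume))
                if self.volume[v] == 0.0]

state = VoiceState(voice_count=3, heartbeat_every=2)
state.set_voice(1, 0.5, 220.0)      # voice 1 is sounding
first = state.tick()                # not due yet
second = state.tick()               # heartbeat: silence for voices 0 and 2
print(first, second)
```

The heartbeat only repeats state the server should already have, so a duplicate costs nothing while a lost 'note off' is repaired within one heartbeat period.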

OSC Ambiguity

So, the protocol defines little more than a verbose RPC syntax, where the names look like little file paths (the parents are the scope, and the bottom-most file in the directory is the method name to invoke). You can make a dead simple instrument that only sends tuples to manipulate the basic voice spline changes (voiceid, volume, frequency, timbre). It will work, and will be a million times easier than trying to do this in MIDI. Everything, including units, is up to you (literal Hz frequencies? floating point 'midi numbers', which are log frequencies? etc.). That's where the madness comes in.

If you use OSC, you must be prepared to ship with a recommendation for a freeware synth (ie: ChucK or SuperCollider), instructions on exactly how to set up and run it, and an actual protocol specification for your synth (because some synth you don't have doesn't know your custom protocol). This is the biggest stumbling block to shipping with OSC over MIDI. But I have finally had enough of trying to make MIDI work for these kinds of instruments. So, here is an OSC version. It really is a custom protocol. The OSC "specification" really just defines a syntax (like JSON, etc). Implementing it guarantees nothing about compatibility, as it's just one layer of compatibility. But if you plan on using SuperCollider or ChucK for the synth, it's a pretty good choice. You can scale OSC implementations down to implement exactly and only what you need.
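To make the units question concrete, here is a small sketch of the two frequency encodings mentioned above. The function names are my own, and it assumes the usual MIDI convention that note 69 is A at 440 Hz with 12 equal steps per octave. Whichever form you put on the wire has to be written into your protocol specification, since the receiving synth cannot guess it.

```python
import math

def hz_to_midi(freq_hz, a4_hz=440.0):
    """Literal frequency to a floating point 'midi number' (a log frequency)."""
    return 69.0 + 12.0 * math.log2(freq_hz / a4_hz)

def midi_to_hz(note, a4_hz=440.0):
    """Floating point 'midi number' back to a literal frequency in Hz."""
    return a4_hz * 2.0 ** ((note - 69.0) / 12.0)

# Fractional note numbers carry microtonal pitch without any pitch-bend tricks.
print(hz_to_midi(440.0), midi_to_hz(69.5))
```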